Nowadays, companies feel the need for an agile, responsive, and scalable data stack. Data must be available at the right moment for consumption and it might not be the easiest task to develop a strategy around the continuous pipelines and the integrated applications to set up your stack.

Alteryx and the Snowflake Data Cloud offer a potential solution to this issue and can speed up your path to Analytics.

In this blog post, we will explore how Alteryx and Snowflake can accelerate your journey to Analytics by sharing use cases and best practices.

What is Alteryx?

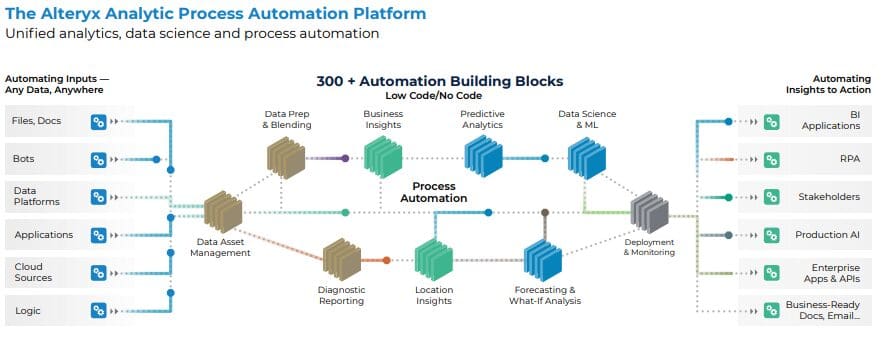

Alteryx is an analytic process automation (APA) platform with a focus on integrating data, processes, and people. Alteryx provides organizations with an opportunity to automate access to data, analytics, data science, and process automation all in one, end-to-end platform.

Its capabilities can be split into the following topics: automating inputs & outputs, data preparation, data enrichment, and data science.

What is Snowflake?

The Snowflake Data Cloud is a platform that delivers data with near-unlimited scale, concurrency, and performance. Organizations can unite their siloed data and securely share governed data while executing diverse analytic workloads. Snowflake’s engine provides a solution for data warehousing, data lakes, data engineering, data science, data application development, and data sharing.

It is a true SaaS offering, meaning there is no hardware (virtual or physical) or software to install, configure, or manage. Additionally, any maintenance and upgrades are handled by Snowflake.

How Can You Benefit From Alteryx & Snowflake Together?

When you think of the lifecycle of your data processes, Alteryx and Snowflake play different roles in a data stack. Alteryx provides the low-code intuitive user experience to build and automate data pipelines and analytics engineering transformation, while Snowflake can be part of the source or target data, depending on the situation.

The powerful processing engine of Snowflake can be leveraged with both platforms, and with different approaches. We will see how to explore these further.

Top Benefits of Leveraging Alteryx & Snowflake

- Unified Analytics Governance

- Centralized data source (single source of truth)

- Tighter control and governance of data

- Increase data productivity by eliminating barriers

- Accelerate breakthroughs through ease-of-access to high-quality data

- Unified Data Platform

- Data accuracy, security, and compliance

- Improved collaboration between teams

- Simplified management of data assets

- Reliable data for informed decision-making

- Amplified Human Output

- Data seamlessly reaches agile knowledge workers for faster insights

- SQL is not mandatory, ability to use drag and drop visual analytics experience

- Enriched analytics with supplemental, value-add data, including geospatial

- Extend the value of Snowflake data with user-friendly AI/ML building blocks

Leveraging Self-Service Analytics With Snowflake and Alteryx

Among the aforementioned benefits, Snowflake is an optimized platform for use with Alteryx due to its flexible deployment and security model. Though most companies will have their data deployments owned by a central IT team, the full value of platforms like Alteryx and Snowflake can be achieved by allowing the business to leverage Snowflake’s processing power for their own analytics.

Unlike many legacy database systems, Snowflake makes it very easy for platform owners to spin up separate databases for decentralized business teams. Platform owners can then partition ‘write’ access to the teams who are leveraging Alteryx to develop their use cases faster with the power of Snowflake.

You can then scale each of those team databases up and down independently, while also keeping them separate from any central or production spaces. This creates a space for business teams to write to Snowflake and accelerate their access to data, speeding up business reporting and data visualization.

Once users have a place to leverage and write to, there are a multitude of ways to then enhance how those users optimally leverage Snowflake in their Alteryx workflows.

Types of Workloads in Alteryx with Snowflake

The amount of workload will vary depending on your use case, but the Alteryx toolset has two different options for processing data from (and to) Snowflake. The two most common ways to work with data sources in Alteryx are Standard and In-Database, and both can be used when leveraging Snowflake.

Standard

Standard workflows provide a breadth of capability but do not leverage the computational power of an underlying data source. When using Standard tools, Alteryx queries the data source and brings data to in-memory processing at runtime. The key points to keep an efficient workload while using Standard building blocks would be following these best practices:

- Use data-reducing queries when using Standard building blocks to reduce the volume of data loaded into memory.

- Use a SAMPLE SQL clause to cut down on data size for an initial analysis.

- Use the standard blocks to prototype faster.

This method is easy to use and could work well for most use cases. It’s important to note that you will gain speed and execution efficiency by processing your Snowflake workloads with In-Database building blocks.

In addition to leveraging a powerful cloud warehouse like Snowflake, a few best practices can be followed to improve performance:

- Leverage In-Database building blocks early and often. This way data can be properly filtered and selected to be enriched using the Alteryx Standard tools.

- Stream the data back into Snowflake for final processing and storage.

Using In-Database where possible in Snowflake is considered a best practice.

Code-friendly Tools to Speed Up Data Integration

Using Alteryx and Snowflake together can help extend the reach and usefulness of both platforms. There are a variety of different tools within Alteryx to help you effectively use all of the functionality of Snowflake within Alteryx’s code-friendly set of tools.

SnowSQL

SnowSQL is Snowflake’s command line client for connecting to Snowflake to execute SQL queries and perform all DDL and DML operations, including loading and unloading data from database tables. It can be run as an interactive shell or in batch mode.

Using the Alteryx Run Command building block, you can create a workflow that can execute a SnowSQL script, giving you the ability to execute anything that SnowSQL supports. Executed scripts can be static or dynamic, you can also use a Formula building block to write a dynamic script that can be executed based on calculations or parameters.

Python

The Snowflake Connector for Python provides an interface for developing Python applications that can connect to Snowflake and perform all standard operations. It provides a programming alternative to developing applications in Java or C/C++ using the Snowflake JDBC or ODBC drivers.

Alteryx has a Python building block and a Python SDK, a flexible way to interact with a Snowflake instance and drive custom outcomes with the full scope of Python capabilities.

REST API

Snowflake has a REST API that you can use to access and update data in a Snowflake database. You can use this API to develop custom applications and integrations that perform queries, or manage your deployment (e.g. provision users and roles, create tables, etc.).

Alteryx can leverage any REST API through the Download building block. With this block, it is possible to retrieve data from a URL to use in downstream processing or to save to a file. Any GET, POST, PUT or DELETE command from the Snowflake REST API can be triggered using Alteryx, amplifying options to leverage Snowflake’s powerful engine.

Accelerating Data Load to Snowflake

At the end of your data pipeline in Alteryx, Snowflake is the perfect place to store your analytical data. To get your data into Snowflake, it’s important to understand the differences when picking between the two main options in your workflow’s Output building block: Insert and Bulk Loader.

Insert

It’s the most basic method and it’s used to insert a single record at a time. The general recommendation is to limit your use of this method to times when you are working with small datasets or instances where you are unable to use the Bulk Loader.

Bulk Loader

It should be your first option when outputting data to Snowflake. This method loads batches of data from files in cloud storage or by copying data files from a local machine (where Alteryx standardly processes) to an internal cloud storage location (Snowflake) before the data is loaded into tables using the COPY command. Alteryx supports outputs to all types of Snowflake stages, including external stages.

When writing large datasets to Snowflake, consider taking a few steps to improve the performance and resiliency of the process. To optimize the number of parallel operations for a load, Snowflake recommends aiming to produce data files roughly 100-250 MB in size compressed.

If the bulk load fails, you can try implementing a chunking strategy, where you load in chunks instead of the entire load again. In Alteryx, Batch Macros can be used to help you manage the bulk load.

Conclusion

Alteryx and Snowflake deliver powerful capabilities to speed up your data processes, but when combined, it’s possible to optimize even more the modern analytics lifecycle, improving user interactivity and guaranteeing scalability.

With Alteryx, it’s possible to speed up development and maintain an organized low-code repository that can be easily replicated and widely adopted by business users, while with Snowflake, you can take advantage of the powerful engine prepared to be scaled to secure your demands, using compute and storage in a decoupled architecture that can be adapted to custom solutions.

If you’re looking to expand your organization’s capabilities with Alteryx and Snowflake, phData can help! Explore our Alteryx and Snowflake consulting services today to bring your business closer to a data-driven future.